GPU vs CPU: The Processors Behind Everything You Do

At the heart of every computer and server sits the central processing unit (CPU): the engine that executes the instructions and logic that keep operating systems and applications running smoothly.

Where CPUs are generalists, graphics processing units (GPUs) are specialists. Built to handle complex mathematical operations across thousands of cores simultaneously, they excel at parallel workloads. That capability has made them the engine behind modern AI. Training and running AI models requires massive amounts of matrix math processed at speed, which is exactly what GPUs are designed for. Today, they power everything from image generation to large language models, making them as central to AI infrastructure as they are to graphics.
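To see why matrix math maps so well onto GPUs, consider matrix multiplication. Each element of the result depends only on one row of the first matrix and one column of the second, so every element can be computed independently of the others. The Python sketch below is a plain CPU illustration, not GPU code, and the function names `matmul` and `matmul_element` are hypothetical.

```python
# Why matrix math suits GPUs: every element of C = A x B depends only on
# one row of A and one column of B, so all elements are independent tasks.

def matmul_element(a, b, i, j):
    """Compute C[i][j] as the dot product of row i of A and column j of B."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))

def matmul(a, b):
    """Multiply two matrices given as lists of lists."""
    rows, cols = len(a), len(b[0])
    # Each (i, j) pair is independent; a GPU would typically hand these to
    # separate threads instead of looping over them one by one.
    return [[matmul_element(a, b, i, j) for j in range(cols)] for i in range(rows)]

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul(A, B))  # [[19, 22], [43, 50]]
```

A 1000 x 1000 result contains a million such independent dot products, which is the kind of workload that can keep thousands of GPU cores busy at once.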

Understanding the differences between CPUs and GPUs matters more than ever, as both play a critical role in how modern systems perform across a wide range of applications. Though both are silicon-based microprocessors, they're built for different jobs.

This post breaks down how each processor works, what the two have in common, where they differ, and how they're used in the real world.

CPU vs. GPU: What's the difference?

Most computers rely on two kinds of microprocessors, each built for a different kind of work: the CPU and the GPU.

Meet the CPU

The CPU is the foundation of any computing system. Built from billions of transistors organized into multiple processing cores, it fetches instructions from RAM, decodes them, and executes them, handling everything from running your operating system to managing complex spreadsheets. Fast, flexible, and essential, it's the processor you rely on for tasks that require quick, versatile decision-making.
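The fetch-decode-execute loop described above can be modeled as a tiny interpreter. This is a minimal sketch under heavy simplification: the instruction set (`LOAD`, `ADD`, `PRINT`, `HALT`) and the two registers are hypothetical, and "memory" is just a Python list of tuples rather than binary machine code in RAM.

```python
# A toy model of the fetch-decode-execute cycle. The instruction set and
# registers are hypothetical, chosen only to illustrate the loop.

def run(program):
    registers = {"A": 0, "B": 0}
    pc = 0  # program counter: index of the next instruction in "memory"
    output = []
    while True:
        instruction = program[pc]          # fetch
        opcode, *operands = instruction    # decode
        pc += 1
        if opcode == "LOAD":               # execute
            reg, value = operands
            registers[reg] = value
        elif opcode == "ADD":
            dst, src = operands
            registers[dst] += registers[src]
        elif opcode == "PRINT":
            output.append(registers[operands[0]])
        elif opcode == "HALT":
            return output
        else:
            raise ValueError(f"unknown opcode: {opcode}")

program = [
    ("LOAD", "A", 2),
    ("LOAD", "B", 3),
    ("ADD", "A", "B"),
    ("PRINT", "A"),
    ("HALT",),
]
print(run(program))  # [5]
```

A real CPU runs this same loop in hardware, billions of times per second, and overlaps the stages of different instructions (pipelining) to keep every part of the core busy.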

Enter the GPU

Originally designed to render graphics, the GPU has evolved well beyond its roots. Its architecture, built around hundreds or thousands of smaller cores, makes it exceptionally good at running many operations simultaneously. While it still powers the visuals in your favorite games, it's now equally at home in machine learning, scientific computing, and AI data processing, where that parallel processing power is exactly what's needed.

What CPUs and GPUs have in common

Despite their differences, CPUs and GPUs share some fundamental building blocks.

Cores

Both processors use several cores to execute tasks. Early processors had just one core; today, both CPUs and GPUs are multi-core by design. The main difference is scale. CPUs typically have a handful of powerful cores optimized for speed, while GPUs pack in thousands of smaller ones built for parallel work.

Memory and cache

Both units rely on fast-access memory to avoid bottlenecks. CPUs use a tiered cache system (L1, L2, and L3), with each level trading speed for capacity: L1 is the smallest and fastest, L3 the largest and slowest. GPUs pair their own caches with high-bandwidth memory designed to feed thousands of cores at once. Either way, the goal is the same: get data to the processor as fast as possible.
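A rough model helps show why the cache tiers matter. In the sketch below, a lookup checks L1, then L2, then L3, and only goes to main memory if every level misses. The cycle counts are illustrative round numbers, not figures for any real processor, and the caches are unlimited Python sets, so nothing is ever evicted.

```python
# A simplified tiered-cache lookup. Cycle counts are illustrative round
# numbers, not measurements of any real chip; caches never evict.
LEVELS = [("L1", 4), ("L2", 12), ("L3", 40)]
RAM_LATENCY = 200

def load(address, caches):
    """Return (where the data was found, total cycles spent finding it)."""
    cycles = 0
    for depth, (name, latency) in enumerate(LEVELS):
        cycles += latency
        if address in caches[name]:
            # Hit: copy the data into the faster levels above this one
            for upper, _ in LEVELS[:depth]:
                caches[upper].add(address)
            return name, cycles
    # Missed every level: fetch from main memory and fill all caches
    cycles += RAM_LATENCY
    for name, _ in LEVELS:
        caches[name].add(address)
    return "RAM", cycles

caches = {"L1": set(), "L2": set(), "L3": set()}
print(load(0x1000, caches))  # ('RAM', 256): first access misses everywhere
print(load(0x1000, caches))  # ('L1', 4): now cached next to the core
```

In this model the second access is 64 times cheaper than the first, which is why keeping frequently used data in cache matters so much for performance.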

Control units

Both CPUs and GPUs have a control unit that coordinates how instructions are fetched and executed. Think of it as the conductor keeping everything in sync. Clock speed plays a role here, since a higher clock speed means more cycles, and therefore more operations, per second. But the architecture around that control unit is what shapes how each processor performs in practice.

How CPUs and GPUs differ

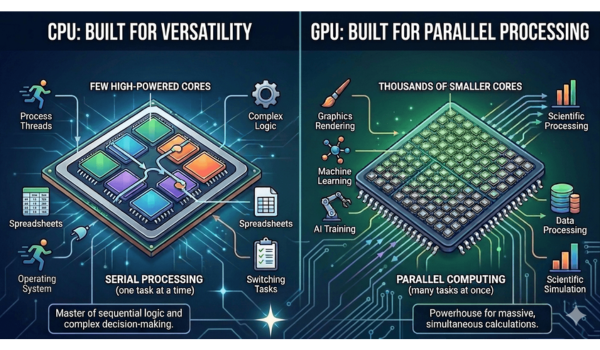

While CPUs and GPUs share some foundational components, they are engineered for fundamentally different types of work. The primary distinction lies in how they process instructions: one is a master of sequential logic and complex decision-making, while the other is a powerhouse for massive, simultaneous calculations.

The central processing unit: Built for versatility

The CPU is the backbone of any computer. It's the processor responsible for managing the overall flow of tasks, from running your operating system to handling complex logic. It's designed for versatility: a relatively small number of powerful cores that can switch between very different kinds of work quickly and efficiently.

A GPU doesn't replace a CPU; they work alongside each other. While the CPU handles the main program, the GPU takes on the repetitive, parallel-friendly workloads, freeing the CPU to focus on what it does best.

The GPU: Built for parallel processing

Where a CPU has a handful of cores, a GPU has thousands of simpler cores, each built around arithmetic logic units (ALUs). Each one is smaller and less powerful individually, but together they are formidable. Instead of tackling tasks one after another, a GPU uses parallel computing to break work into pieces and process them all at once. This makes GPUs exceptionally efficient at the kinds of large-scale numerical operations that would bottleneck a CPU, from rendering graphics to training AI models.
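Breaking work into pieces and processing them all at once is known as data parallelism: the same operation is applied to many elements, with each chunk handled independently. The Python sketch below shows only the decomposition, using a thread pool on the CPU. CPython threads won't actually speed up pure-Python arithmetic, and a real GPU would run thousands of lightweight threads in hardware. The function names are hypothetical.

```python
# Data parallelism sketch: the same operation applied to independent chunks.
# Runs on CPU threads only to show the decomposition; CPython threads won't
# speed up pure-Python arithmetic, and a GPU would use thousands of threads.
from concurrent.futures import ThreadPoolExecutor

def scale_and_add(chunk, a, b):
    """Apply y = a*x + b to every element of one chunk."""
    return [a * x + b for x in chunk]

def parallel_map(data, a, b, workers=4):
    if not data:
        return []
    size = -(-len(data) // workers)  # ceiling division: elements per chunk
    chunks = [data[i:i + size] for i in range(0, len(data), size)]
    n = len(chunks)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Chunks are processed independently; map returns results in order
        results = list(pool.map(scale_and_add, chunks, [a] * n, [b] * n))
    # Stitch the per-chunk results back together
    return [y for part in results for y in part]

print(parallel_map(list(range(10)), a=2, b=1))
# [1, 3, 5, 7, 9, 11, 13, 15, 17, 19]
```

Because no chunk depends on any other, adding more workers (or, on a GPU, more cores) lets more chunks run at the same time without changing the result.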

Key differences at a glance

Cores and architecture: CPUs prioritize per-core performance with a small number of high-powered cores. GPUs prioritize throughput with thousands of smaller cores optimized for parallel work.

Speed and specialization: CPUs are flexible but slower at parallel tasks. GPUs are faster for workloads that can be broken into simultaneous operations.

Use cases: CPUs handle databases, operating systems, and general computing. GPUs handle graphics rendering, machine learning, scientific computing, and data processing.

Parallelism: CPUs offer limited parallelism. GPUs are built for it.

Real-world examples: CPU and GPU power at work

Video rendering and content creation: GPUs accelerate video transcoding, format conversion, and real-time rendering, now more relevant than ever as demand for high-resolution and AI-enhanced video content continues to grow across streaming, gaming, and virtual production pipelines.


AI and data processing: GPUs power the matrix math behind machine learning, AI model training, and inference at scale. In 2026, this is their dominant use case, with dedicated AI accelerators like Nvidia's H100 and B200 series driving the bulk of enterprise and cloud GPU demand.

Scientific and cloud computing: From drug discovery to climate modeling, GPUs handle the kind of large-scale parallel processing tasks that define modern high-performance computing (HPC) workloads, increasingly delivered through cloud-based GPU instances rather than on-premises hardware.

Autonomous systems and robotics: GPUs process real-time sensor data, object detection, and navigation algorithms in autonomous vehicles, drones, and industrial robots.

Cryptocurrency mining: Once a major GPU use case, crypto mining has largely shifted to ASICs and other specialized hardware, freeing GPU capacity for AI and compute-intensive workloads.

Conclusion

CPUs and GPUs have never been more complementary than they are today. As AI workloads grow heavier and computing demands grow more specialized, knowing which processor fits which task is a strategic decision. Together, these processors form the foundation of modern computing, from the laptop on your desk to the data centers driving the next generation of AI.

Frequently Asked Questions

When should you consider upgrading to a discrete GPU instead of relying on an integrated GPU?

Upgrading to a discrete GPU is typically necessary when your workload exceeds the parallel processing capabilities of a CPU's integrated graphics. While integrated GPUs have become more capable for general productivity and light media consumption, they share system RAM and power limits with the CPU, which can create performance bottlenecks.

You should consider upgrading in the following scenarios:

High-end video production: If you are editing 4K or 8K video, or working with complex color grading and 3D effects, a discrete GPU provides the dedicated video memory (VRAM) and specialized cores needed for smooth playback and faster rendering.

Machine learning and AI development: Training models or running local LLMs requires massive parallel throughput. Discrete GPUs are essential for these tasks because they can handle thousands of simultaneous operations that would overwhelm an integrated chip.

3D modeling and CAD: Professional applications like AutoCAD, Blender, or 3ds Max benefit from discrete hardware to render complex geometries and textures in real time without lag.

Performance gaming: To run modern, graphically intensive games at high resolutions or high frame rates, a discrete GPU is required to manage the heavy lifting of real-time ray tracing and texture mapping.

Multiple high-resolution displays: If your setup involves three or more monitors or several 4K displays, a discrete GPU offers better multi-display management and dedicated ports to maintain crisp performance across all screens.

On the other hand, if your computing is mostly web browsing, office work, and light media consumption, an integrated GPU will usually suffice. The key is understanding the specific performance requirements of the tasks you actually run.

What is more important for PC gaming: CPU or GPU?

For modern PC gaming, the GPU is generally the more important component. Most contemporary titles are graphically intensive, meaning the GPU handles the heavy lifting of rendering textures, lighting, and complex visual effects. If your GPU is too weak, you will experience low frame rates and stuttering, regardless of how powerful your CPU is.

However, the CPU still plays a vital role. It manages game logic, physics, AI behavior, and input processing. A weak CPU can create a bottleneck, preventing even a high-end GPU from reaching its full potential.

In short, while you should prioritize a powerful GPU for the best visual performance, you must ensure your CPU is capable enough to keep up with the data demands of your graphics card.
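A simple way to reason about bottlenecks: when the CPU prepares one frame while the GPU renders the previous one, the frame rate is roughly limited by whichever takes longer. The sketch below encodes that simplified model. The millisecond timings are hypothetical, the function name is illustrative, and real games add complications such as frame pacing, V-Sync, and driver overhead.

```python
def frame_budget(cpu_ms, gpu_ms):
    """Estimate (frames per second, limiting component) from per-frame times.

    Simplified model: CPU and GPU work on consecutive frames in parallel,
    so throughput is set by the slower of the two stages.
    """
    slowest_ms = max(cpu_ms, gpu_ms)
    limiter = "GPU" if gpu_ms >= cpu_ms else "CPU"
    return round(1000 / slowest_ms, 1), limiter

# Hypothetical timings: same CPU (5 ms/frame), progressively faster GPUs
print(frame_budget(5, 16))  # (62.5, 'GPU')
print(frame_budget(5, 8))   # (125.0, 'GPU')
print(frame_budget(5, 4))   # (200.0, 'CPU')
```

Going from an 8 ms to a 4 ms GPU still raises the estimate, but only to 200 fps rather than 250, because the CPU's 5 ms per frame is now the limit.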

What does MMU stand for?

The memory management unit (MMU) is the hardware that manages the processor's access to memory. It's almost always integrated directly into the CPU. During the fetch-decode-execute cycle, every address the CPU reads from or writes to passes through the MMU, which translates the program's virtual addresses into physical locations in RAM and enforces memory protection, so one program can't overwrite another's data. By keeping that translation fast and isolating programs from one another, the MMU improves both system performance and stability.
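At its core, the MMU translates the virtual addresses a program uses into physical addresses in RAM, and refuses accesses the program isn't allowed to make. The sketch below models that in Python under stated assumptions: a 4 KiB page size (common, but not universal), a made-up page table, and a dictionary standing in for the translation lookaside buffer (TLB) that real MMUs use to cache recent translations. The class and exception names are hypothetical.

```python
# A simplified model of MMU address translation. The 4 KiB page size is a
# common choice; the page table contents are made up for illustration.
PAGE_SIZE = 4096

class PageFault(Exception):
    """Raised when an address has no mapping or the access isn't allowed."""

class MMU:
    def __init__(self, page_table):
        # Maps virtual page number -> (physical frame number, writable?)
        self.page_table = page_table
        self.tlb = {}  # cache of recently used translations

    def translate(self, virtual_address, write=False):
        vpn, offset = divmod(virtual_address, PAGE_SIZE)
        entry = self.tlb.get(vpn)
        if entry is None:  # TLB miss: walk the page table
            entry = self.page_table.get(vpn)
            if entry is None:
                raise PageFault(f"no mapping for address {virtual_address:#x}")
            self.tlb[vpn] = entry
        frame, writable = entry
        if write and not writable:
            raise PageFault(f"write to read-only address {virtual_address:#x}")
        return frame * PAGE_SIZE + offset

mmu = MMU({0: (7, True), 1: (3, False)})
print(hex(mmu.translate(0x0010)))  # 0x7010: page 0 maps to frame 7
print(hex(mmu.translate(0x1004)))  # 0x3004: page 1 maps to frame 3
```

In a real system, a page fault is handed to the operating system, which may load the missing page from disk or terminate the program; here it simply raises an exception.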
